For our CS 419 - Introduction to Machine Learning course project in Spring 2018, Arpan Banerjee, Srivatsan Sridhar, and I tackled the problem of Voice Conversion – making one person’s voice sound like another’s. Think of it as the world’s most elaborate impression act, but with neural networks doing the impersonation.

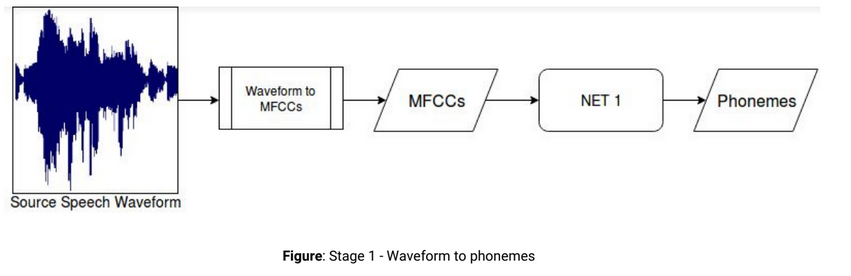

We built a two-stage deep learning pipeline: source waveforms are first converted to phonemes, and those phonemes are then converted to target waveforms. The first network converted the source speaker’s waveforms to phonemes.

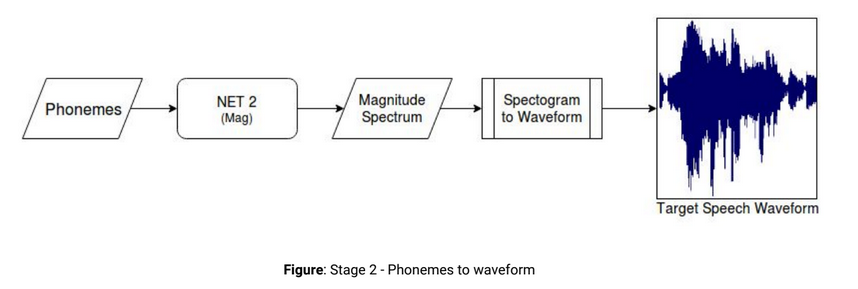

The second network converted the obtained phonemes to the target speaker’s waveforms.

We used the TIMIT corpus (many source speakers) and the CMU ARCTIC corpus (a single target speaker) for training. As part of the project, we experimented extensively with bidirectional Recurrent Neural Networks using LSTM and GRU cells.

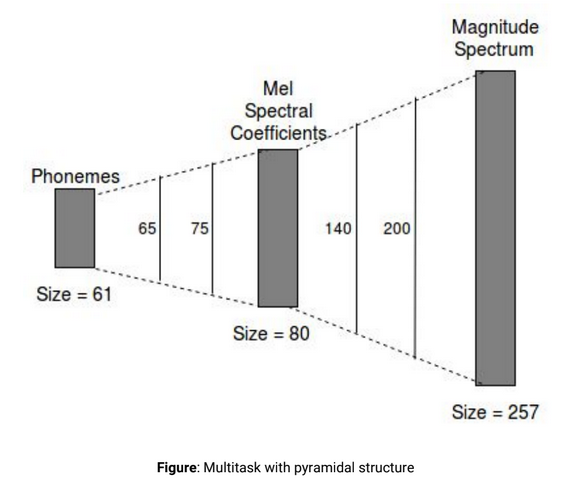

We used a multitask approach to train the network on both mel spectral coefficients (as an intermediate representation) and magnitude spectrum (the final output), employing a pyramidal network architecture.
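To make the multitask idea concrete, here is a minimal sketch of how a combined objective over the two outputs could look. The post does not say which loss or weighting we used, so the mean squared error, the `mel_weight` parameter, and the function names `mse` and `multitask_loss` are all assumptions for illustration. It is written in plain Python for readability; a real training loop would compute this inside a deep learning framework.

```python
def mse(pred, target):
    """Mean squared error between two equally shaped 2-D lists (frames x bins)."""
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same number of frames")
    total, count = 0.0, 0
    for p_row, t_row in zip(pred, target):
        if len(p_row) != len(t_row):
            raise ValueError("each frame must have the same number of bins")
        for p, t in zip(p_row, t_row):
            total += (p - t) ** 2
            count += 1
    if count == 0:
        raise ValueError("inputs must not be empty")
    return total / count


def multitask_loss(mel_pred, mel_true, mag_pred, mag_true, mel_weight=0.5):
    """Weighted sum of the intermediate (mel) loss and the final (magnitude) loss.

    mel_weight is a hypothetical hyperparameter: 1.0 trains only on the mel
    target, 0.0 trains only on the magnitude spectrum.
    """
    mel_loss = mse(mel_pred, mel_true)
    mag_loss = mse(mag_pred, mag_true)
    return mel_weight * mel_loss + (1.0 - mel_weight) * mag_loss


# Toy example: one frame, 2 mel bins and 3 magnitude bins.
loss = multitask_loss(
    mel_pred=[[1.0, 2.0]], mel_true=[[1.0, 4.0]],
    mag_pred=[[0.0, 0.0, 0.0]], mag_true=[[3.0, 0.0, 0.0]],
)
```

The point of a shared objective like this is that the network gets a training signal from the intermediate mel representation as well as from the final magnitude spectrum, rather than from the final output alone.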

Our experiments and approaches are detailed in the project report.